Your AI demo worked. Now what?

The question we're getting asked a lot right now is some version of: "Can AI help us do this?" Sometimes yes. Sometimes not yet. Sometimes yes — but maybe not in the way you're imagining.

What's become clear is that most teams are still building a shared language for what these projects actually involve.

Tool names get thrown around freely — Claude, Cursor, ChatGPT, n8n — but the harder conversation is about what you're actually trying to build, and what that requires. Here's the framing and frameworks we're leaning into...

Types of AI projects (for example)

1/ AI Agent - A knowledgeable assistant with a defined role and clear limits. Ask it something, it responds, drawing only on the brief and knowledge files you've given it. Also called: virtual assistant, knowledge bot, copilot, AI assistant. Good for: internal knowledge tools, onboarding assistants, compliance checkers, sales enablement.

2/ AI-Enhanced Automated Workflow - A triggered sequence of tasks where AI handles steps that are too variable or judgement-heavy for traditional automation. The difference from a standard Zapier flow: the same input doesn't always produce the same output, because the AI layer is making calls. Also called: agentic workflow, AI automation, AI pipeline. Good for: lead enrichment, report generation, alert routing, content summarisation.

3/ AI-Powered App - A custom product with a proper interface where AI does meaningful work under the hood. Not a chatbot. A tool built around AI capability. Also called: AI-native app, intelligent application, AI-enabled platform. Good for: client-facing tools, dashboards with AI-generated insights, personalised recommendation engines.

4/ Multi-Agent System - Multiple AI assistants working together, each with a specific role, handing off to each other as work progresses. Also called: MAS, agentic system, agent orchestra. Good for: end-to-end process automation, complex research workflows, operations requiring multiple specialisms in sequence.

Most engagements start with type 1 or 2. Types 3 and 4 typically follow once a team has seen what good looks like and wants to expand. Worth noting: the lines between 2, 3, and 4 blur in practice — which is itself evidence that the shared language is still being built.

What it actually took — a real example

One of our internal rebuilds this quarter is a good illustration of how we're approaching AI workflows — not "point the model at the problem" but designing the decomposition first. The deliverable is our Axioned Findings & Opportunities Report — a website audit we send to prospects and clients covering tech stack, site speed, SEO, security, and UX. Historically this was a multi-person effort: different specialists ran different tools, wrote different sections, and someone stitched it together.

We rebuilt it as a plugin on Claude Code with a deliberate structure. An orchestrator agent owns the engagement — scope, inputs, validation, review. It talks to the human. It does not run audits. A parent skill assembles the final report — order of operations, tone, length, structure. It does not audit. Five specialist skills do the actual work, one per domain, each with its own tools and reference material, each writing its section to a temp file. A formatting skill handles branded Word output separately, so brand changes touch one file. And a data layer calls real tools — PageSpeed, CrUX, Mozilla Observatory — so numbers in the report are measured, not invented.

There's also a fallback path: if the orchestrator fails, sub-skills run directly; if a sub-skill fails, the report continues with that section skipped. The shape mirrors how you'd staff the work with people — one owner, one assembler, five specialists, one formatter, one data source. That's the lever. The model is the worker, not the architect.

Want to see this in action on your own site? Submit your URL and we'll take care of the rest: https://form.typeform.com/to/GuqNiSEf

When the demo works but production doesn't

Teams working in this space often hit a specific wall: experiments that work in demos but can't produce auditable decisions at scale. The issue is often that the AI has been given free-floating context rather than structured, traceable knowledge to work from.

A practical test for whether you're there: ask the same question, or run the same process, twenty times. If you get twenty meaningfully different answers, perhaps you don't yet have something you can trust or reliably use.

Many teams discover this later than they'd like — after the demo has gone well and someone asks for it in production.

The discipline for solving it has its own vocabulary...

- An ontology is a structured way of defining concepts and the relationships between them — OWL (Web Ontology Language, a W3C standard) is one of the formal frameworks for building them.

- A knowledge graph maps those relationships so an AI can navigate them reliably.

- A controlled vocabulary — often structured using SKOS (Simple Knowledge Organization System, also a W3C standard) — tags your data with consistent, agreed-upon labels so the AI always knows what it's working from.

- Then there's the question of whether it's actually working: evals are structured test scenarios that measure whether outputs meet defined criteria — reliably, across inputs.

- Structured knowledge tells the AI what to work from. Evals tell you whether it's working correctly.

The point isn't to become an expert in any of this. It's to recognise when your AI project needs such expertise — because most teams don't see the messy middle coming until they've already hit it.

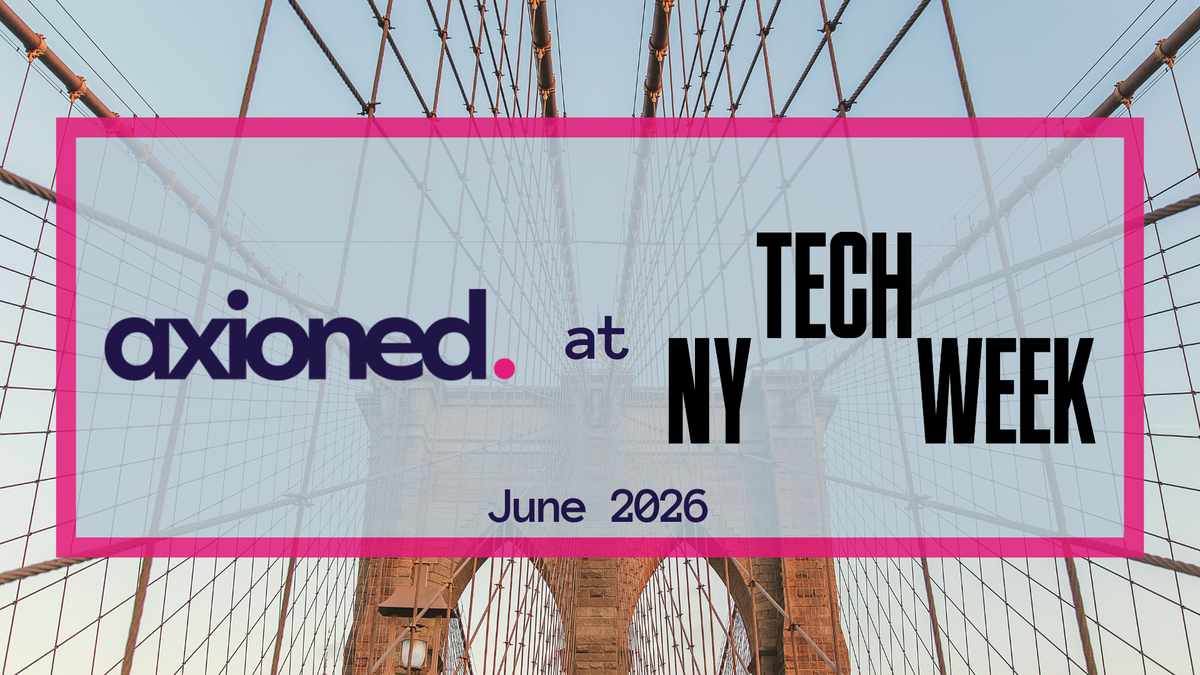

#NYTechWeek 2026

This 👆 is the conversation we're bringing to our NY Tech Week event — and we'd love for you to be part of it.

Join us?

When/Where: Tuesday, June 2nd, 6pm, Gramercy Park/NYC